The Plaintext Relay Problem

Why secure systems keep exposing data after the link, and why the next architecture must stop treating plaintext as the price of usefulness.

TL;DR

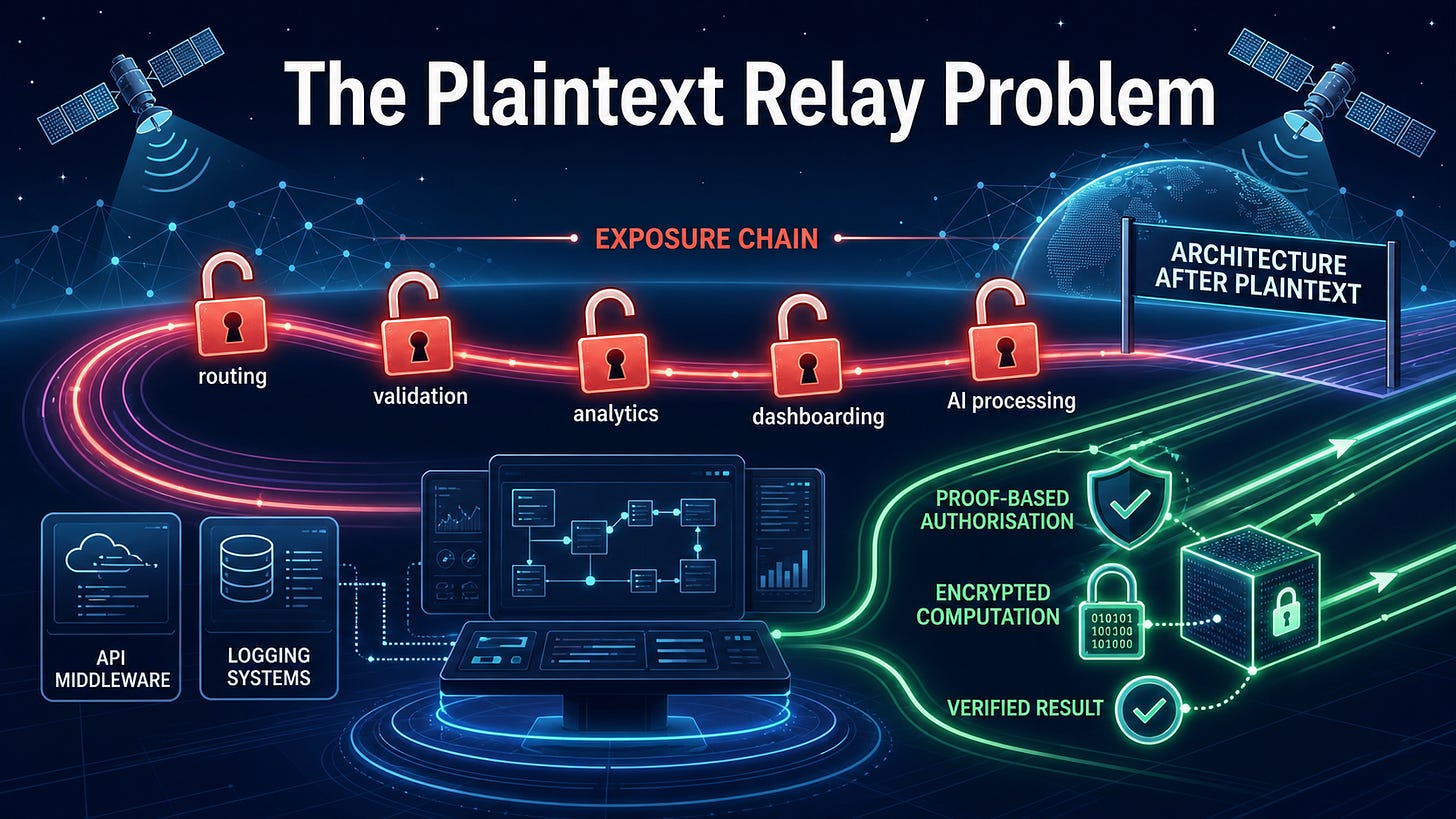

Modern systems rarely expose plaintext just once. Instead, they expose it through a series of ordinary steps: routing, validation, logging, and AI processing. Each step seems reasonable, but together they create an exposure chain. The solution to this isn’t “better” trust; it’s reducing the need for plaintext through encrypted computation and proof-based authorisation.

Exposure isn’t a bug - it’s a feature

We still talk about breaches as if attackers need to break encryption. Usually, they’re just waiting for the system to decrypt the data for them.

Most plaintext exposure doesn’t happen because of a mistake; it happens because the system is doing exactly what it was designed to do. Data is decrypted so it can be routed, enriched, or analysed. It’s turned into plaintext because, in most architectures, usefulness depends on visibility.

This isn’t exotic. It’s standard operating procedure for enterprise, defence, and mission systems. Plaintext doesn’t just “explode” into existence - it leaks into the architecture one “reasonable” exception at a time.

The Relay Effect

The core issue isn’t a single point of decryption; it’s the chain. Every component in a modern stack makes the same promise: “We only decrypt the data briefly.”

But when you look at the workflow, “briefly” happens at every point:

Briefly for routing and validation.

Briefly for analytics and logging.

Briefly for AI processing and partner sharing.

Eventually, “briefly” becomes “repeatedly.” Repeatedly becomes normal. Normal becomes architecture. Think of it as a relay race in which the data is decrypted for use and re-encrypted for transport at every handoff. A workflow full of “temporary” exposure points isn’t a temporary risk - it’s a permanent design flaw.

Case Study: The SatCom Workflow

This is easiest to see in SatCom or defence. The satellite link itself might be a fortress, but the problem starts once the signal lands.

Field Asset: Generates sensitive data.

Satellite Link: Encrypted for transmission.

Gateway: Decrypted for normalisation.

Mission System: Decrypted for fusion with other sources.

Dashboard: Decrypted for the operator.

Partner Sharing: Decrypted for an allied agency.

The attacker doesn’t need to defeat the encryption. They just need to find the place where the system has already defeated it for them.

The Reality: Professional attackers don’t waste time on strong maths. They target the “processing points” downstream: API middleware, logging systems, admin consoles, and AI pipelines.
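The workflow above can be written down as data, which makes the exposure chain countable. The following is a minimal sketch, not a description of any real system: the `Hop` type, the `needs_plaintext` flags, and the `redesign` helper are all hypothetical names introduced here, and the flags are simply one reading of the case study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    name: str
    purpose: str
    needs_plaintext: bool  # assumption: does this hop decrypt in order to work?


# Hops taken from the SatCom case study; the flags are this sketch's reading of it.
SATCOM_WORKFLOW = [
    Hop("Satellite link", "transport", needs_plaintext=False),
    Hop("Gateway", "normalisation", needs_plaintext=True),
    Hop("Mission system", "fusion with other sources", needs_plaintext=True),
    Hop("Dashboard", "operator display", needs_plaintext=True),
    Hop("Partner sharing", "release to an allied agency", needs_plaintext=True),
]


def exposure_points(workflow):
    """Return the hops where the system itself decrypts the data."""
    return [hop for hop in workflow if hop.needs_plaintext]


def redesign(workflow, protected):
    """Return a copy of the workflow in which the named hops no longer need plaintext."""
    return [
        Hop(h.name, h.purpose, h.needs_plaintext and h.name not in protected)
        for h in workflow
    ]


if __name__ == "__main__":
    for hop in exposure_points(SATCOM_WORKFLOW):
        print(f"{hop.name}: decrypted for {hop.purpose}")
```

Listing hops this way turns “where does plaintext appear?” into a reviewable inventory: every `True` flag is a place the encryption has already been undone by design, and each hop moved to `False` by a redesign is one fewer target.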

Why better controls aren’t enough

We’ve spent decades throwing IAM, Zero Trust, and micro-segmentation at this. While necessary, these don’t change the core problem: the workflow still requires plaintext to function.

Better controls might make exposure harder to abuse, but they don’t make it unnecessary. A beautifully governed exposure point is still an exposure point, just one with a quarterly review cycle.

The Better Question

The traditional security question is: How do we protect plaintext once it appears?

The architectural shift asks: Which parts of this workflow can be redesigned so plaintext doesn’t need to appear at all?

The Architecture After Plaintext

The next generation of security must support workflows where data is used, verified, and acted upon without being seen. This requires:

Proof of identity without oversharing identity data.

Computation on encrypted data where practical.

Verification that processing happened correctly without revealing the raw source.

Where ZKPs and FHE fit

This isn’t science fiction; the tools are maturing:

Zero-Knowledge Proofs (ZKPs): Allow you to prove something is true (like a user’s entitlement) without revealing the data that makes it true.

Fully Homomorphic Encryption (FHE): Allows computation directly on encrypted data. It lets a system compute useful results without ever seeing the input; the results themselves stay encrypted until the key holder decrypts them.
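To make “computation on encrypted data” concrete, here is a toy sketch using the Paillier cryptosystem. Note the substitution: Paillier is only additively homomorphic, not fully homomorphic, so it illustrates the principle rather than FHE itself. The primes are tiny, well-known Mersenne primes chosen for readability, and the function names are this sketch's own; nothing here is suitable for real use.

```python
import math
import secrets

# Toy key material: two known Mersenne primes. Far too small for real security.
P = 2**31 - 1
Q = 2**61 - 1
N = P * Q                      # public key
N_SQ = N * N
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # private: lcm(p-1, q-1)
MU = pow(LAM, -1, N)           # private: valid because the generator is g = n + 1


def encrypt(m: int) -> int:
    """Encrypt an integer 0 <= m < N using only the public key N."""
    if not 0 <= m < N:
        raise ValueError("message out of range")
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    # With g = n + 1, g^m mod n^2 simplifies to 1 + m*n mod n^2.
    return ((1 + m * N) % N_SQ) * pow(r, N, N_SQ) % N_SQ


def decrypt(c: int) -> int:
    """Decrypt with the private values LAM and MU."""
    u = pow(c, LAM, N_SQ)
    return ((u - 1) // N) * MU % N


def add_encrypted(c1: int, c2: int) -> int:
    """Add two plaintexts by multiplying their ciphertexts; no key needed."""
    return c1 * c2 % N_SQ


if __name__ == "__main__":
    # The processing party sees only ciphertexts; only the key holder decrypts.
    total = add_encrypted(encrypt(1200), encrypt(345))
    print(decrypt(total))
```

The party calling `add_encrypted` never holds `LAM` or `MU`, so it can aggregate values it cannot read. Full FHE schemes extend this idea from addition to arbitrary computation, at a substantially higher cost.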

Proof-Based Execution

Together, these tools point toward Proof-Based Execution. Instead of asking “Who may see this data?”, we ask “What can be verified without exposing it?”

This is vital for cross-domain collaboration. In a coalition environment, Country A and Country B don’t need full access to each other’s raw datasets; they need verified results. Trust doesn’t scale across agencies and jurisdictions, but proof does.
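As a small illustration of “what can be verified without exposing it”, the sketch below uses a Schnorr proof of knowledge made non-interactive with the Fiat-Shamir transform. It assumes a hypothetical setup in which an entitlement is bound to a public key, so that proving knowledge of the matching secret proves the entitlement without revealing the secret. The group parameters are deliberately tiny toy values, and all function names are this sketch's own.

```python
import hashlib
import secrets

# Toy group: P = 2*Q + 1 with P and Q both prime; G = 4 generates the
# subgroup of prime order Q. Real deployments use far larger parameters.
P, Q, G = 2039, 1019, 4


def _challenge(public_key: int, commitment: int, context: bytes) -> int:
    """Fiat-Shamir: derive the challenge by hashing the public transcript."""
    data = f"{P}|{Q}|{G}|{public_key}|{commitment}|".encode() + context
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def keygen():
    """Return (secret, public_key) with public_key = G^secret mod P."""
    secret = secrets.randbelow(Q - 1) + 1
    return secret, pow(G, secret, P)


def prove(secret: int, context: bytes):
    """Prove knowledge of `secret` for a given context without revealing it."""
    public_key = pow(G, secret, P)
    nonce = secrets.randbelow(Q - 1) + 1
    commitment = pow(G, nonce, P)
    c = _challenge(public_key, commitment, context)
    response = (nonce + c * secret) % Q
    return commitment, response


def verify(public_key: int, context: bytes, proof) -> bool:
    """Check G^response == commitment * public_key^challenge (mod P)."""
    commitment, response = proof
    c = _challenge(public_key, commitment, context)
    return pow(G, response, P) == commitment * pow(public_key, c, P) % P


if __name__ == "__main__":
    secret, public_key = keygen()
    proof = prove(secret, b"entitlement:feed-7")  # hypothetical context label
    print(verify(public_key, b"entitlement:feed-7", proof))
```

The verifier handles only the public key, the context label, and the proof. The secret never crosses the boundary, which is the property that lets verification travel between agencies where raw access cannot.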

The Bottom Line

The next security model will be built around workflows where sensitive data can be processed and acted upon without ever being exposed. That’s the direction PrivID is building toward: a world where we stop the relay race and start securing the data itself.